When it comes to building an analytics engine such as Google Analytics, measuring the speed performance of every website that currently exists is a daunting task. There are countless factors to consider.

This is one of the reasons why neither humans nor bots can accurately measure a website’s speed performance.

We can only follow best practices that we are confident will improve speed performance.

First of all, we need to identify the problem accurately before we can answer it properly. We did some digging to find out how many websites exist as of 2020, and we were surprised to learn that there are about 1.8 billion of them. On top of that, each website can have anywhere from one to several million internal links.

If PageSpeed Insights (PSI) takes 10 seconds per link on average, it is practically impossible for any PageSpeed checker to collect all this information, even if it only handles the homepage of each website.

If you are wondering about the math behind this, it would take roughly 570 years for a single PageSpeed Insights instance to process just the homepages of all websites.
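The 570-year figure follows from simple arithmetic. Here is a short sketch, assuming exactly 1.8 billion homepages, a flat 10 seconds per test, 365-day years, and tests run one after another on a single instance:

```python
# Back-of-the-envelope estimate: how long would one PageSpeed Insights
# instance need to test every homepage on the Web, one after another?
WEBSITES = 1_800_000_000               # ~1.8 billion websites (2020 estimate)
SECONDS_PER_TEST = 10                  # assumed average PSI run per URL
SECONDS_PER_YEAR = 60 * 60 * 24 * 365  # 365-day years, no leap days


def years_to_scan(sites: int, seconds_per_test: float) -> float:
    """Return the years needed to test `sites` URLs sequentially."""
    return sites * seconds_per_test / SECONDS_PER_YEAR


if __name__ == "__main__":
    years = years_to_scan(WEBSITES, SECONDS_PER_TEST)
    # Truncate to whole years for display (the exact value is ~570.8).
    print(f"{int(years)} years")
```

Running it prints `570 years`. Splitting the work across N parallel instances divides that time by N, but it would still cover only homepages, not the millions of internal links many websites have.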

So how can anyone efficiently measure the speed performance of every active link on the Web?

Google’s solution to this problem is simple. Many of your visitors already browse your website with Google Chrome, and their browsers have the answer the moment they fully load one of your pages.
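The principle can be sketched in a few lines: instead of a bot loading each page, every real visit contributes one measurement, and the measurements are aggregated per page. The sketch below is hypothetical and only illustrates the idea; the function names and sample values are made up, and the 75th-percentile summary borrows the threshold Google documents for assessing Core Web Vitals from field data.

```python
import math
from collections import defaultdict


def p75(samples: list[float]) -> float:
    """75th percentile of a non-empty list, using the nearest-rank method."""
    ordered = sorted(samples)
    rank = math.ceil(0.75 * len(ordered))  # 1-based nearest rank
    return ordered[rank - 1]


def summarize_field_data(visits: list[tuple[str, float]]) -> dict[str, float]:
    """Group real-user load times (ms) by URL and return each URL's p75."""
    by_url: dict[str, list[float]] = defaultdict(list)
    for url, load_ms in visits:
        by_url[url].append(load_ms)
    return {url: p75(times) for url, times in by_url.items()}


# Hypothetical load times reported by real visitors' browsers.
visits = [
    ("https://example.com/", 1200.0),
    ("https://example.com/", 1800.0),
    ("https://example.com/", 950.0),
    ("https://example.com/", 2600.0),
    ("https://example.com/blog", 3100.0),
]

if __name__ == "__main__":
    # {'https://example.com/': 1800.0, 'https://example.com/blog': 3100.0}
    print(summarize_field_data(visits))
```

No crawler ever loads these pages; the numbers arrive as a by-product of normal browsing, which is why this approach scales to the whole Web.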

Wouldn’t you have done the same if you owned both the Google Search engine and Google Chrome, with its 70% global adoption?

Furthermore, this is the most accurate data you can get, because it reflects what your real visitors experience. In the end, it is always about improving that experience.

Besides being accurate, this is also one of the most efficient ways to solve a problem like this.

You can find more on this in an article on moz.com by Tom Anthony, who wrote about it back in 2018 after a brief discussion with Google’s John Mueller.

I’ve seen many website owners try to speed up their websites and improve their speed scores at the expense of user experience.

Well… Don’t!

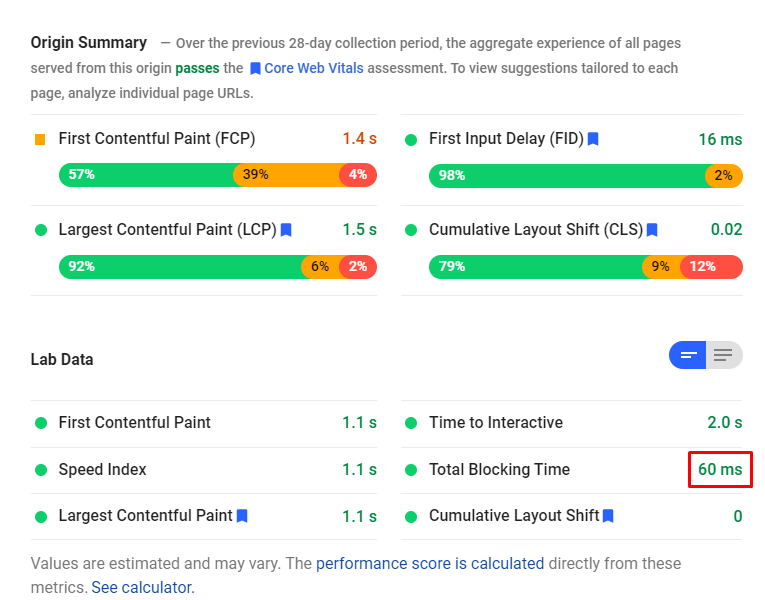

The KPIs provided by Google PageSpeed Insights, GTMetrix, or IMGHaste are only indicators of how to improve your speed performance and, in turn, the overall user experience. If you chase them by any means necessary and sacrifice the user experience along the way, you might end up doing more harm than good. The ultimate KPIs are the true goals of your website, not its speed scores.

Sacrificing user experience can cost you conversions, sales, leads, goal completions, and more.

Most importantly, keep in mind that you can make changes to your website that Googlebot, or any other bot, is incapable of detecting or understanding.

However, this doesn’t mean your real visitors won’t notice those changes and report the results back to Google.

For example, we know for a fact that, at the time of writing, Googlebot doesn’t support HTTP/2, but Google can still see the improvements that deploying HTTP/2 brings to your users.

The same applies when you use a Service Worker as your image service with imghaste: Googlebot won’t be aware of it, but your users will.

In practice, this means you don’t need to worry much about PageSpeed Insights or GTMetrix; focus on improving things for your visitors instead.